Lab 3 – Is It Normal?

代写r语言 You’ll notice that the median and the mean of this distribution are very similar, which is typical for a normal distribution.

Introduction

This lab will introduce you to your first of many statistical tests, the Shapiro-Wilk test for normality. All this tests asks is the likelihood of something coming from a population with a normal distribution or, if you prefer, “is it normal?” First this lab will walk you through how to make test data sets so that you can see what happens when you run a Shapiro-Wilk test on data that you know is normal. Then, you will use real data and determine if the salaries at the University of Arizona are normally distributed.

Learning Outcomes 代写r语言

By the end of today’s lab you should be able to:

- Identify an obviously non-normal distribution using a histogram

- Use rnorm()to make up fake distribution data

- Use test()to evaluate the likelihood a sample came from a normal distribution

- Use mean(), sd(), median(), max()and min() to summarize data

- Describe what it says about a distribution when the mean and median are very different

- Use sample()to take a sub-sample of a large data set

Part 1: Randomized Data 代写r语言

Sometimes it’s easier to understand statistics when you practice them using made up data sets where you know what the answer should be – sort of like using a walkthrough with an answer key in front of you. Making randomized data is like making your own key, because you know what the answer should be. Today we will be using a test to determine if a sample came from a normal distribution, so our first step is to make up data that we know is normally distributed and see how the tests performs on that data.

Part 1.1 Make the Data

There are many functions in R that make different data distributions. The rnorm() function makes normal data, and takes the arguements of sample size (n), mean (mean), and standard deviation (sd).

If we wanted a sample (called normal) of 50 values from a normal distribution with a mean of 25 and a standard deviation of 2, we’d write:

normal <- rnorm(n = 50, mean = 25, sd = 2)

Part 1.2 Test The Data

This R object isn’t a data frame like we’ve used in previousl labs. Instead, it is a vector. If we want to see how the code worked using a histogram we don’t need to use $ to pull out a column. Instead, enter the object itself, like so:

hist(normal)

We can see it looks pretty normal but not perfect.

A follow-up question would be how well did it replicate the original request of a mean of 25 and a standard deviation of 2? You can use the mean() and sd() function to find out:

mean(normal) sd(normal)

Remember, this is a sample, not a population – but yours should still be pretty close to the initial mean you put in. For example, my initial mean in my rnorm() function was 25 and my standard deviation was 2 – and my mean of my sample is 24.97 and standard deviation is 2.14, which is pretty close. 代写r语言

Part 1.3 Summary Statistics

While mean and standard deviation are the most common summary statistics you will encounter, median, maximum and minimum are also very commonly used to explain the central tendency and range of a dataset. The code to run them is very simple:

median(normal) max(normal) min(normal)

You’ll notice that the median and the mean of this distribution are very similar, which is typical for a normal distribution.

Meanwhile the maximum and the minimum are very different from standard deviation. This is because the standard deviation is a measure of how far the bulk of the data is from the mean, while maximum and minimums are the furthest extent of the data itself.

QUESTION 1: Which of the following chunks of code should, statistically, yield something that is most similar to the maximum value?

A.2*sd(normal)

B. mean(normal)

C. mean(normal) +2*sd(normal)

Part 1.4 Shapiro-Wilk Test

In this class you will learn many different statistical tests, each of which asks a different question. This particular test, the Shapiro-Wilk Normality Test asks “how likely is it that this sample came from a normally distributed population?”

To run it is pretty simple – you use shapiro.test() and put your R object in the parentheses: 代写r语言

shapiro.test(normal)

What this does is return a few values:

## ## Shapiro-Wilk normality test ## ## data: normal ## W = 0.9811, p-value = 0.5991

Mine are a little different than yours because this is a random sample, but the pieces are the same:

1.data: the name of your data, in this case normal

2.W: the calculated test statistic

3.p-value: the probability of your results

W is calculated by the Shapiro-Wilk test to measure how normal or not normal your distribution is. Then, the computer takes that W test statistic and says “what’s the probability you would get a W like this from a normal distribution?”

That’s what the p-value is: a measure of how frequently the conditions of the test are true. 代写r语言

In my case, I got a p value of 0.6, which is essentially a 60% chance this came from a normal distribution (but remember yours will be slightly different because normal is randomized!)

While different people use different cut-offs, a general rule is that if the p value is less than .05 (or 5%), it’s weird. So if you run a Shapiro-Wilk test and get p < 0.05, it’s probably (but not necessarily) not from a normal distribution.

Part 2 – Real Data 代写r语言

The data you will use for today’s lab comes from https://www.wildcat.arizona.edu/page/university-of-arizona-salary-database-fy2020, which has compiled the salaries of all of the staff at University of Arizona for fiscal year 2020. I have done some data cleaning and added a few extra columns, and uploaded this data set as a csv file to D2L.

Part 2.1 Load the Data

Download the dataset Faculty Salaries FY 2020.csv from D2L. Import your dataset into R using the steps we learned in Labs 1 and 2, and remember to call it something short and easy to spell like “salary” or “fs.” In my case, I’ve called it fs.

fs <- read.csv(“C:/Users/Meaghan/Dropbox/Science And School/Courses – Taught/ISTA 116/2020 Labs/Lab 3 – Normal Distributions/Faculty Salaries FY 2020.csv”)

Part 2.2 Look at the Data

Use the hist() function to evaluate the data (Lab 2 can help you with directions). You are specifically looking at the column called Annual.at.Full.FTE. You’ll notice it doesn’t really look much like a normal distribution. You might want to use the xlim arguement to look in finer detail (see Lab 2).

QUESTION 2: What makes this data look like it is not likely to be a normal distribution? Use hist() and the xlim or breaks arguments to evaluate each of the answers below and select what is the most accurate description of this data:

Most of the data is below 150,000 but there are a few individuals that make more, up to several million a year

Most of the data is below 500,000 but there are a few individuals that make more, up to several million a year

Almost everyone is making 250,000 dollars a year but there are a few individuals that make more, up to several million a year

Part 2.2 Summarize the Data

Use the mean(), median(), sd(), max() and min() functions to pull out summary statistics for the annual salary at full FTE.

QUESTION 3: What is the mean of this distribution?

QUESTION 4: What is the median of this distribution?

QUESTION 5: What is the standard deviation of this distribution?

QUESTION 6: What is the minimum of this distribution?

QUESTION 7: What is the maximum of this distribution?

QUESTION 8: The mean and the median of this data are pretty different. That is a good indication that:

The data is normally distributed 代写r语言

The data is not normally distributed

The mean is a better measure of central tendency for this data

Something was wrong with your math

Part 2.3 Shapiro Test

So if you try to run a shapiro test, you will run into an error. Try it, using the following code:

shapiro.test(fs$Annual.at.Full.FTE)

Like many tests, the Shapiro-Wilk test has certain restrictions. You can’t run it on a sample size that is too small, and you can’t run it on a sample size that is too large. In this case, you need between 3 and 5000 samples.

QUESTION 9: Look at the dataset in the Environment window. How many observations are there?

One way of getting around this is to take a smaller sample of your much larger dataset. You can do this using the sample code, specifying that you want to take a sample of (x) and how big that sample should be (size):

n <- sample(x=fs$Annual.at.Full.FTE, size=4999)

Now I have a random sample of 4999 values of the Full FTE salary. Because it is random, your samples will be slightly different from mine, and if you re-run the code you will get different samples over and over and over again. But now that I have my random smaller sub-sample, I can run a Shapiro-Wilk test:

shapiro.test(n)

NOTE: Because n is a value string, you don’t need to use the $ in this code! Just enter the r object.

QUESTION 10: Take a random sample of 4999 values and run a shapiro test on it. Is your random sample of salary data normally distributed?

Yes, my p value is less than .05 meaning the data is likely from a normally distributed population 代写r语言

No, my p value is less than .05 meaning there is a less than 5% probability of this sample coming from a normally distributed population

QUESTION 11: Is there a chance that someone in this class will get a p value of more than .05 for their subsample in this class?

Yes, but it is unlikely because the sample size is so small compared to the original highly skewed dataset

Yes, but it is unlikely because of how large the sample size is, which captures much of the original highly skewed dataset

No, there is no chance because the data is so skewed

There is a column in this dataset called NewSalary. This is the salary for each individual after the 2020 faculty furlough program. Because the faculty furlough is based off of different percentages for each salary (see the PercentPayCut column), it should apply inequally throughout the salary distribution – people who make more have a larger paycut, while people who make less have a smaller one.

QUESTION 12: Should differing percentages make a distribution become closer to a normal distribution, or further away from a normal distribution?

A.Because the long righthand-skew is being reduced, it should become more asymmetrical (non-normal) B. Because the long righthand-skew is being reduced, it should become more like a normal distribution C. Because the paycut impacts all data points, it should stay the same but shifted to the left

Use this or another adjusted histogram code to check whether the new salaries are more or less like a normal distribution:

par(mfrow = c(2,1)) hist(fs$Annual.at.Full.FTE, xlim = c(0, 500000), breaks = 250, main = "Original Salary", xlab = "Annual Salary, Cut off at 500,000", border = "red") abline(v = median(fs$Annual.at.Actual.FTE), col = "red", lwd = 2) abline(v = median(fs$NewSalary), col = "blue") hist(fs$NewSalary, xlim = c(0, 500000), breaks = 250, main = "New Salary", xlab = "Annual Salary, Cut off at 500,000", border = "blue") abline(v = median(fs$NewSalary), col = "blue", lwd = 3) abline(v = median(fs$Annual.at.Actual.FTE), col = "red")

The point of the faculty furlough was to save money for the university, which anticipated a 250 million dollar shortfall for the 2020-2021 school year. Use the sum() function to determine how much money the University spends every year on salaries (using the Actual rather than Full salary here):

sum(fs$Annual.at.Actual.FTE)

QUESTION 13: How much money did the university save? Use the sum() function on the correct column to determine the amount of money saved.

A.108 million dollars

B.1.08 billion dollars

C.784 million dollars

D.9 million dollars

Part 2.4 Understanding Data

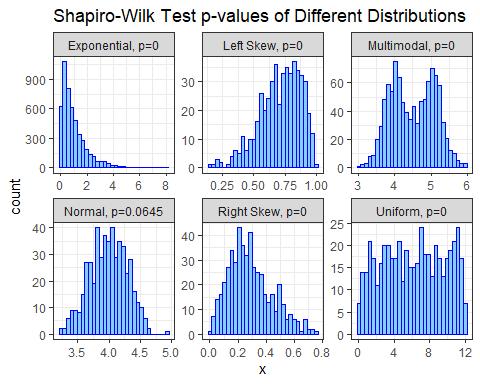

Salary analyses are an important part of many company’s decision making processes. Salary analyses can pinpoint problems like racial or gender inequity, show when company resources are going to the wrong area, and can help inform whether certain groups of people need to have a raise or not. Almost all salary studies show a non-normal distribution. In fact, if you look at individual departments almost all of them will be non-normally distributed too. Here are 6 example departments at UA:

QUESTION 14: Why is it that salary data is almost always non-normally distributed?

QUESTION 15: Take a look at the faculty salary data. What do you think the differences are between the Annual.at.Full.FTE and the Annual.at.Actual.FTE columns?

And that’s it! Please turn in your answers via the corresponding D2L quiz.

更多代写:澳大利亚代考价格 proctoru作弊 爱尔兰管理学作业代写 self-reflective report代写 Term Paper代写北美 chemistry代写

合作平台:essay代写 论文代写 写手招聘 英国留学生代写