CSC 656-01, S23

Coding Project #2


Overview

Using the code harness provided, add instrumentation code to measure elapsed time, and implement 3 different ways of computing a sum. Grab the code harness here: git clone https://github.com/SFSU-CSC746/sum_harness_instructional.git

Build and run the codes on Perlmutter@NERSC on a CPU node.

Run the codes for different problem sizes and record the runtime.

Using the runtime data from your code runs, compute three derived performance metrics (MFLOP/s, % of memory bandwidth utilized, and memory latency), then create charts of this data and answer some performance analysis questions.

Deliverables

The deliverables for this project include:

- Source code in a single zipfile or compressed tarfile (no RAR files)

- A single PDF containing results of analysis: charts and answers to questions. This PDF will contain images of 3 charts and some text answers to questions. The simplest way to produce this PDF is to use Google Docs, which lets you combine image files and text then download as a PDF.

Part 0 – General Information

The code harness for this assignment is accessible via github:

git clone https://github.com/SFSU-CSC746/sum_harness_instructional.git

Please refer to the Spring 2023 NERSC Topics Google Doc for information about accessing Perlmutter, the NERSC software ecosystem, transferring files to/from NERSC, and building/running jobs on Perlmutter CPU nodes.

Reference material:

- Perlmutter system overview and architectural specifications

Computing various metrics:

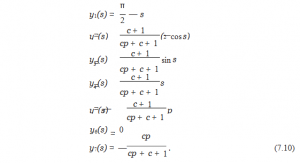

- MFLOP/s = ops/time, where

○ ops = number of operations / 10^6 (i.e., millions of operations)

○ time = runtime(sec)

- % of memory bandwidth utilized = (bytes/time) / (capacity), where

○ bytes = number of memory bytes accessed by your program

○ time = runtime of your program (secs)

○ capacity = theoretical peak memory bandwidth of the system

- Avg memory latency = time/accesses, where

○ time = runtime(sec)

○ accesses = number of program memory accesses

Part 1 – Direct Sum

Do your own implementation of the “direct sum” method of computing a sum. You will need to provide an implementation inside the sum() function in the sum_direct.cpp file in the code harness.

You will need to add timer instrumentation to the benchmark.cpp file to measure and report elapsed time. Refer to the chrono_timer code distribution for more details. Note: you need to do this task only once, and the instrumentation code will be compiled into the 3 different executables.

On a Perlmutter CPU compute node, run the code at varying problem sizes and record the runtime for each problem size in a text file or spreadsheet.

For each problem size, compute MFLOP/s from the runtime and the number of operations.

For each problem size, compute the % of memory bandwidth your code utilizes.

For each problem size, compute the estimated memory latency.

Part 2 – Vector Sum

Do your own implementation of the “vector sum” method of computing a sum. You will need to provide an implementation inside both the setup() and sum() functions in the sum_vector.cpp file in the code harness. Here, setup() consists of initializing an array of length N to contain the values 0..N-1.

Be sure you’ve completed instrumenting benchmark.cpp to measure elapsed time (see above).

On a Perlmutter CPU compute node, run the code at varying problem sizes and record the runtime for each problem size in a text file or spreadsheet.

For each problem size, compute MFLOP/s from the runtime and the number of operations.

For each problem size, compute the % of memory bandwidth your code utilizes.

For each problem size, compute the estimated memory latency.

Part 3 – Indirect Sum

Do your own implementation of the “indirect sum” method of computing a sum. You will need to provide an implementation inside both the setup() and sum() functions in the sum_indirect.cpp file in the code harness. Here, setup() consists of initializing an array of length N to contain random numbers in the range 0..N-1 (hint: use lrand48() % N).

Be sure you’ve completed instrumenting benchmark.cpp to measure elapsed time (see above).

On a Perlmutter CPU compute node, run the code at varying problem sizes and record the runtime for each problem size in a text file or spreadsheet.

For each problem size, compute MFLOP/s from the runtime and the number of operations.

For each problem size, compute the % of memory bandwidth your code utilizes.

For each problem size, compute the estimated memory latency.

Part 4 – Analyzing Results

From each of the codes in Parts 1 – 3, you now have the following three datasets:

- MFLOP/s at each problem size

- % of memory bandwidth utilized at each problem size

- Memory latency at each problem size

Create plots of each of these datasets using the Python script provided in the code harness. Note that the script will need some modification to adjust titles, etc. Using the interface on the matplotlib plot display window, save PNGs or PDFs of these plots. Note: your submission will be marked down if your charts do not have correct titles, axis annotations, legends, etc.

MFLOP/s: Create a 3-variable chart showing problem size vs. MFLOP/s. Use the Python script included in the code harness; some modification may be required.

Memory bandwidth: Create a 3-variable chart showing problem size vs. % of peak memory bandwidth utilized. Use the Python script included in the code harness; some modification may be required.

Memory latency: Create a 3-variable chart showing problem size vs. memory latency. Use the Python script included in the code harness; some modification may be required.

Analysis questions. Please provide a brief (2-3 sentences maximum) answer to each of the following questions about the performance of your codes. For full credit, make use of the concepts we discuss in class and in the P&H textbook: what types of operations are more expensive and why, and which of the codes performs a larger number of the more expensive operations?

Computational rate. Which of the 3 methods has the best computational rate (MFLOP/s)? Why?

Memory bandwidth usage. Of the 2 methods vector sum and indirect sum, which has higher levels of memory bandwidth utilization? Why?

Memory latency. Of the 2 methods vector sum and indirect sum, which shows lower levels of memory latency? Why?

Important Dates

- Submissions open: Thu 15 Mar 2023

- Submissions due: Thu 6 Apr 2023 23:59 PDT

- Submissions close: Sun 9 Apr 2023 23:59 PDT

Grading

This assignment is worth 100 points (about 1/8 of your total grade).

Late Submissions

Per the CSC 656 Syllabus:

Advice: do not wait until the “last minute” to get started on homeworks. Homeworks can require a significant amount of effort, and it is inevitable that unexpected things happen that will slow you down.

- Late submissions will be subject to a 5%-per-day penalty assessment: 0-1 days late, 5% deduction; 1-2 days late, 10% deduction; 2-3 days late, 15% deduction, etc.

- Submissions are not accepted more than 3 days late, except in unusual cases that are (1) outside the student’s control (e.g., medical) and that (2) can be verified with objective documentation (e.g., a doctor’s note, but preferably a formal accommodation from the DPRC office).
